Introduction

Web scraping is a technique that content thieves frequently use to copy the contents of a website. According to “enterprisetimes”, web scraping costs companies that do business online between $3.6 billion and $30 billion in revenue (web scraping bots account for 46% of web traffic). It also harms the SEO rank of the content, its integrity when stolen copies are posted in multiple places across the web, and finally the conversion rate, which matters a great deal to an online business: if scraped content is republished across many domains, visitors have probably already seen it elsewhere by the time they reach the original website, which leads to a lower conversion rate.

With this in mind, it’s important to take proper precautions to protect web content from content thieves. The available methods vary with the circumstances and the platform, so it’s difficult to cover them all in one article. This tutorial therefore shows how to prevent web scraping in Nginx with the help of the geo module, known in the Nginx documentation as ngx_http_geo_module. It won’t defeat every technique scrapers use, but it provides basic protection and works well against known web scraping sites, which are used by everyone from amateurs to some professionals to extract content.

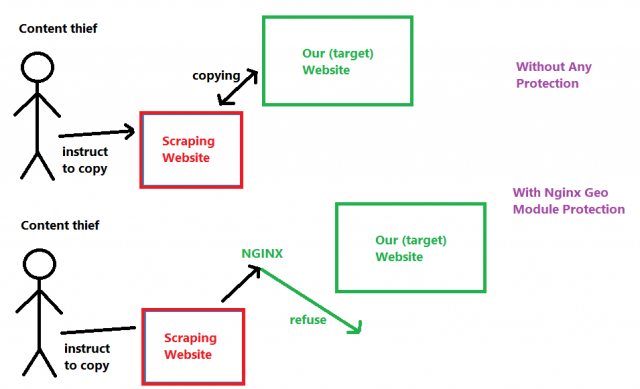

Mechanism

As the diagram shows, the content thief first accesses a scraping website to initiate the scraping. The scraping website then sends requests to the target website to download all of its resources. Once the resources are downloaded, the content thief can view them through the scraping website or even download them as an archive. That is how it goes without any protection. However, if the geo module is installed and configured properly, requests sent by the scraping website are refused as soon as they are detected, so the contents of the target website aren’t stolen.

Code Snippet

Nginx is usually built with ngx_http_geo_module by default on servers running Ubuntu 14.04 or any later version. If the module is not available, run the following commands in the SSH client with administrative privileges to install the nginx-extras package, which provides the ngx_http_geo_module module required for this tutorial.

sudo apt-get update
sudo apt-get install nginx-extras

Open the FTP client, log in with the user credentials, and browse to the server’s files. If the website uses the default Nginx configuration file, its full path is /etc/nginx/sites-available/default. Open the file in any text editor, such as Notepad++; even the default Windows Notepad is sufficient. Once the file is open, add the following lines along with the offending IP addresses. Keep one line per record, and don’t type the “<” and “>” characters when stating an IP address and its value; for instance, write 1.1.1.1 1;. Finally, place the geo block outside the server {} block.

geo $offending_ips {
    default 0;
    <IP Address> 1;
}
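When the list of offending addresses grows, typing each record by hand becomes error-prone. The following is a minimal sketch, not part of the original setup, of a shell helper that turns a list of addresses (one per line on standard input) into a geo block in the format shown above. The function name make_geo_block is hypothetical, and the sketch assumes every non-empty, non-comment input line is a valid IP address or CIDR range; it does no validation.

```shell
#!/bin/sh
# make_geo_block: read addresses from stdin, one per line, and print
# an Nginx geo block that maps each of them to 1 and everything else to 0.
# Hypothetical helper for illustration; input lines are not validated.
make_geo_block() {
    printf 'geo $offending_ips {\n'
    printf '    default 0;\n'
    # The "|| [ -n ... ]" part keeps a final line that lacks a trailing newline.
    while IFS= read -r ip || [ -n "$ip" ]; do
        # Skip blank lines and lines starting with "#".
        case "$ip" in
            ''|'#'*) continue ;;
        esac
        printf '    %s 1;\n' "$ip"
    done
    printf '}\n'
}
```

For example, running make_geo_block < offenders.txt (where offenders.txt is a hypothetical file of addresses) prints a block that can be pasted into the configuration file outside the server {} block.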

The offending IP addresses can be found in the log file named by the access_log directive of each file in /etc/nginx/sites-available/. For instance, the default server block lives in /etc/nginx/sites-available/default and declares access_log /var/log/nginx/access.log, so its log is the access.log file stored in /var/log/nginx/. That file, /var/log/nginx/access.log, can be followed over SSH with this command, which redisplays its last 15 lines every two seconds.

watch tail -n 15 /var/log/nginx/access.log
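Watching the tail of the log shows recent requests, but heavy scrapers are easier to spot when requests are tallied per address. The sketch below is one possible way to do that and is not part of the original setup. The function name top_ips is hypothetical, and the sketch assumes the log uses Nginx’s default combined format, in which the client address is the first whitespace-separated field of each line.

```shell
#!/bin/sh
# top_ips LOGFILE [N]: print the N busiest client addresses (default 10),
# one per line, as "<request count> <address>", busiest first.
# Hypothetical helper; assumes the client address is the first field,
# as in Nginx's default "combined" log format.
top_ips() {
    log_file="$1"
    limit="${2:-10}"
    # Count one hit per log line, keyed by the first field.
    awk '{ hits[$1]++ } END { for (ip in hits) print hits[ip], ip }' "$log_file" |
        sort -rn |
        head -n "$limit"
}
```

Calling top_ips /var/log/nginx/access.log 15 lists the fifteen busiest addresses in the default log. A high count alone doesn’t prove scraping, so it is worth checking the requests of each candidate address before adding it to the geo block.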

Now add the following code snippet to either the default or the appropriate server block configuration file of Nginx. Here the $offending_ips variable holds the value assigned to the client’s IP address: if the geo block contains 1.1.1.1 1;, a request from 1.1.1.1 sets the variable to 1, while any unlisted address gets the default value of 0. The if condition evaluates that value, so a request from an offending IP address is answered with a 404 response and never reaches the content. If the web server hosts a WordPress installation, make sure the if condition and its block come before try_files, as seen in the following snippet.

location / {
    if ($offending_ips) {
        return 404;
    }
    try_files $uri $uri/ /index.php$is_args$args;
}